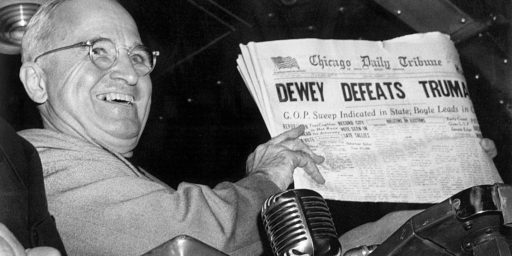

Math, Damned Math, and Statistics

How Obama can have a 75 percent chance of winning an election despite being essentially tied in the polls:

Jeff Leek, a biostatistics professor at Johns Hopkins, explains how it is that President Obama can have a 75 percent chance of winning an election despite being essentially tied in the polls:

Let’s pretend, just to make the example really simple, that if Obama gets greater than 50% of the vote, he will win the election. Obviously, Silver doesn’t ignore the electoral college and all the other complications, but it makes our example simpler. Then assume that based on averaging a bunch of polls we estimate that Obama is likely to get about 50.5% of the vote.

Now, we want to know what is the “percent chance” Obama will win, taking into account what we know. So let’s run a bunch of “simulated elections” where on average Obama gets 50.5% of the vote, but there is variability because we don’t have the exact number. Since we have a bunch of polls and we averaged them, we can get an estimate for how variable the 50.5% number is. The usual measure of variance is the standard deviation. Say we get a standard deviation of 1% for our estimate. That would be a pretty accurate number, but not totally unreasonable given the amount of polling data out there.

We can run 1,000 simulated elections like this in R* (a free software programming language, if you don’t know R, may I suggest Roger’s Computing for Data Analysis class?). Here is the code to do that. The last line of code calculates the percent of times, in our 1,000 simulated elections, that Obama wins. This is the number that Nate would report on his site. When I run the code, I get an Obama win 68% of the time (Obama gets greater than 50% of the vote). But if you run it again that number will vary a little, since we simulated elections.
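The code Leek refers to is not reproduced above. As a rough stand-in, here is a minimal Python sketch of the same idea (not Leek's R code). It assumes the simulated vote share is drawn from a normal distribution with mean 50.5 and standard deviation 1; the function name `simulate_win_share` and its parameters are made up for illustration.

```python
import random


def simulate_win_share(mean=50.5, sd=1.0, n_sims=1000, seed=None):
    """Return the fraction of simulated elections where the vote share tops 50%.

    Assumption: each simulated vote share is an independent draw from a
    normal distribution with the given mean and standard deviation.
    """
    rng = random.Random(seed)
    wins = sum(1 for _ in range(n_sims) if rng.gauss(mean, sd) > 50.0)
    return wins / n_sims


if __name__ == "__main__":
    # With only 1,000 simulations the answer moves a little from run to run.
    share = simulate_win_share()
    print(f"Obama wins in {share:.1%} of simulated elections")
```

Under these assumptions the long-run answer is a bit over two thirds, which is in line with the 68% Leek reports from a single run of 1,000 simulations.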

A few things to note here:

1. While statisticians will run multiple simulations to arrive at their best estimate, we’ll run only one actual election. So, there’s a 1 in 4 chance of Romney winning even if all the polling going into these estimates is accurate.

2. We assume the polling is accurate because it has been for decades and it’s all we have to go on. But several trends (the rise of cell-only households, the advancement of call screening, the proliferation of polls and the resulting onset of poll fatigue, etc.) could ultimately undermine the current model. We won’t know that until after the fact.

3. The Electoral College makes forecasting more tricky than it would be in the simplified example Leek points to. There are multiple states where the polling is close and the polling is less reliable at the state level.

via Chris Lawrence’s Facebook stream

Polling day before election has been accurate but a week out? Not even close. These samples assuming 2008 turnout are preposterous. Nevertheless it seems the left-wing pollsters PPP, QPOLL, NJ, CNN, MARIST, SUSA, etc are all in with the idea.

@BILL MITCHELL: No matter how much you keep making that claim, it doesn’t change the bottom-line reality. Pollsters are not weighting by party ID, except Ras.

@BILL MITCHELL:

Beyond your belief in this idea… do you have any real recent proof of what you are claiming?

At best, if you are looking at an individual poll without any historical analysis there might be a bit of truth to this.

HOWEVER, when one looks at ALL POLLS ACROSS BROADER TIMELINES what you are writing simply isn’t true. Take Reagan/Carter 1980 – there’s a long held “legend” that Carter was leading Reagan after the debate. That was largely due to a single Gallup poll. As this chart demonstrates, the majority of polling, taken in aggregate, clearly put Reagan ahead in the national vote and he had a rather stable lead for quite a while.

Take another example — Bush/Kerry — for all the talk of Exit Polling, it’s clear that Bush was leading Kerry a week ahead of the election and there was no real fluctuation in the polls.

@BILL MITCHELL: I love that, within three sentences, you prove you lack historical knowledge, exhibit your own biases and prove that you don’t get how polling works. That’s some efficient self-debunkery, there.

It’s worse than I thought…….

Bill Mitchell: “Polling day before election has been accurate but a week out? Not even close.”

James Joyner: “We assume the polling is accurate because it has been for decades and it’s all we have to go on.”

My question for righties who are indulging in this poll revisionism: Are you worried at all about being ignored after the election?

Hate to say it, but the number of conspiracy theories* that are basically in the conservative mainstream really are having an effect on the right’s credibility.

* like a bunch of “left-wing pollsters” conspiring to juice the polls so it looks like a dead heat. Seriously? How stupid do you think I am, man?

@SKI: Quinnipiac does it too–they ask respondents to self-identify and weight the poll according to what they saw in 2008 turnout.

I don’t really have any problem with asking for party ID, nor do I think there’s any issue with “skewed polls” or any of the other stuff the “poll denialists” go on about. The only question I have–and I’ll throw this out to all the other OTB commenters, because maybe I’m missing something–is whether a 2008 turnout model is valid in 2012. 2008 was a very special year, a set of circumstances that won’t be repeated ever. Is it reasonable to expect Democrats to turn out as they did that year, or for Republicans not to?

Nate Silver now has it closer to a 1-in-5 chance for Romney (79% to 21%)…down from 1-in-4…and that has been the consistent trajectory of the polling.

The Princeton Election Consortium shows almost no scenarios in which Romney wins…and has VA, NC, and FL as toss-ups…which is new for that site. VA has been the only toss-up for weeks until now. (The toss-up states are likely to change as the prediction is updated several times a day).

http://election.princeton.edu/history-of-electoral-votes-for-obama/

It’s also been pretty clear from events over the last few days that the Romney camp is getting really desperate.

I fail to see how anyone but a low-information voter would consider electing Romney. But there are a lot of low-information voters out there. And in here.

@Mikey:

No, they don’t. Quinnipiac:

“If a pollster weights by party ID, they are substituting their own judgment as to what the electorate is going to look like. It’s not scientific,” said Doug Schwartz, the director of the Quinnipiac University Polling Institute, which doesn’t weight its surveys by party identification.

http://www.theatlantic.com/politics/archive/2012/09/are-polls-skewed-too-heavily-against-republicans/262834/

And oh-by-the-way; A new version of the ADP Jobs Report, designed to align closer to the BLS Report, has private sector jobs at +158,000 in October. The prediction was for 135,000. Weekly jobs claims fell last week again.

We’ll know more tomorrow at 8:30am.

@Mikey:

We won’t know what the 2012 electorate will look like for sure until after the election, but no pollster is showing an electorate that is going to look like 2008, or they’d be showing Obama ahead by 7 in the national polling.

The problem is that Republicans often don’t want to admit that there are a substantial number of people who used to call themselves Republicans but now call themselves independents. They’re still going to vote for Romney, but they aren’t counted as Republicans in the party ID information, which means there is a larger spread between Democrats and Republicans than those Republicans want to believe could be true. Assuming your question really means “Is it possible that Democrats could have the same party ID advantage as in 2008?” then yes, they could, even if more Republican voters go to the polls in 2012 than did in 2008.

Yglesias has an interesting post on the smallest geographically possible EV win.

http://www.slate.com/blogs/moneybox/2012/10/31/geographically_smallest_electoral_college_map.html

@KariQ:

Correct. And it’s been that way for quite a while. For those interested in looking at historical data to back up this point, check out this interactive graph from Pew:

http://www.people-press.org/2012/06/01/trend-in-party-identification-1939-2012/

You are very confused. I suggest you read one or two of the many endorsements of Romney by the increasing number of traditionally Democrat voters. They’ve done very detailed and calculated assessments.

Or how about these Obama voters?

I think this chart is better, since it’s a shorter time frame and includes more polls. Notice the near mirror image of the independent and Republican lines since the beginning of 2011.

@KariQ: Well, I read a transcript of an interview with Peter Brown, assistant director of polling at Quinnipiac, and he stated pretty specifically that they do ask respondents to self-identify: “We ask voters, or the people we interview, do they consider themselves a Democrat, a Republican, an independent or a member of a minor party.”

So either the director and his assistant are in complete disagreement, or they’re talking about different things. Or they do something different with what they get in answers to party preference. But at least according to one of the guys in charge, they do ask.

@C. Clavin:

Yes, I’m much more comfortable with 1-in-5 than 1-in-4, and the trend is our friend.

@JKB:

It is actually disturbing that so many people want to be conservative so badly that they’ll support such an awful candidate. He’s an Etch-A-Sketch, in case you’ve forgotten, who will fake relief efforts to get elected.

Forget the polls, we have an even more scientific indicator:

Last night on The O’Reilly Factor, Dick Morris predicted Romney in a 5 to 10 point landslide in the popular vote and 300+ in the electoral college.

Romney is sooooo screwed.

@Mikey:

I believe you misunderstood the quote you included. They ask voters how they self-identify, of course. But they do not weight the polls to reflect any particular party ID. They simply report what they find.

@Mikey:

Collecting information about party affiliation is a completely different thing than weighting by party affiliation.

@KariQ:

Of course it’s possible, but is it likely? Do Democrats have the same level of enthusiasm in 2012 that they did in 2008? Are they going to prioritize getting to the polls, as they did in 2008?

Well, anyway, the only real answer is the one we get next Tuesday. It’ll be interesting to see which polls proved most accurate.

@KariQ: You’re right, they don’t change anything in their result based on party ID, they just split the results based on it, so they’ll say “respondents were X% Democrat, Y% Republican, Z% independent.” And if it works out that it seems to “oversample” Democrats, it’s really irrelevant, because it’s just based on what the split was among respondents. They’re not saying “more people identified as Democrats, therefore Obama has an advantage.” They’re just reporting who’s in their sample.

@ JKB…

Yeah…I read your comments…you shouldn’t be accusing anyone of being confused.

@mattb: Yes, see my response to KariQ above.

@john personna:

Interesting on the etch-a-sketch comment. Whereas, many who voted for Obama in 2008 are pointing out how they, for some reason I can’t fathom, thought he was a moderate. Oh, and many of the staunch Democrats supporting Romney now are doing so because they cannot trust what Obama says on Israel.

So really it is down to voting for the self-serving, legislatively incompetent, who misled so many when he was elected four years ago and who has left good men to die by not ordering in supporting forces or a guy who’s changed his mind as he’s learned more during his campaign?

@Mikey:

As I said in my first response, no one is expecting the 2012 election to look like 2008. No one. No pollster is trying to model the 2008 election, no pollster is weighting their polls to look like that electorate. No one. None.

The race is clearly going to be closer this year than 2008. It is entirely possible that there will be fewer Democratic voters, yet the partisan ID breakdown will be unchanged because of the shift among Republicans to self-identifying as independents.

But you know, it doesn’t have to be 2008 all over again for Obama to win. He can still win while carrying fewer states and having a smaller percentage of the vote than he did 4 years ago. So the whole “it won’t look like 2008” argument is really beside the point. The question is will enough Obama supporters vote for him to win?

@JKB:

Ya know, I used to give your intelligence the benefit of a doubt, but that statement removes all doubt.

Standard deviation and variance are unequivocally _not_ the same thing. I know the author was using casual language in that statement but given that variance is well-defined within the context of statistics it is a poor place to use colloquial vernacular. A better reading would undoubtedly be, “The usual measure of dispersion is the standard deviation.”

@john personna:

About Romney’s, ahem, relief efforts, Matt Yglesias has another one:

If Mitt Romney Wants To Help Hurricane Victims, He Should Donate Some Of His Vast Fortune

Cheap shot? Maybe.

But he has a point.

Ok, and JKB:

Cute trick, but no questions on healthcare or abortion or gay rights? It’s all Patriot Act and drones?

Look, I know we should all vote for Gary Johnson this round, but c’mon…….no one else –not McCain, not Romney, and certainly not Obama– would roll any of that hawkish war on terror stuff back. No one.

If Romney wins, it will prove nothing. The forecasting models say there’s a possibility he will. And life will go on.

If Obama wins, though, it should shut some people up. Particularly those that don’t believe in fancy things like numbers and evidence. But it won’t: they’ll claim the vote was rigged, or it’s because of voter fraud, or they’ll blame it on too many Mexicans. Anything to pretend that reality is different than what it is.

P.S. I see that Nate Silver offered to bet Joe Scarborough over the results of the presidential election. This actually makes me think a little less of him. While he was simply defending his statistical model, it makes it appear that his model and his preferences are not appropriately separated.

@JKB:

I think that’s called bringing the crazy.

US death toll in Libya: 4

US death toll in Iraq/Afghanistan: 6630

Now why, rationally, would Libya be your focus for US military policy?

@Franklin:

People bet with models all the time, sports and finances.

@Franklin:

@Mikey:

They’re not saying “more people identified as Democrats, therefore Obama has an advantage.” They’re just reporting who’s in their sample.

Yes, and this is where people often fundamentally misunderstand how most polls work. Party ID is a response from those polled, just like their responses to whom they will vote for.

When partisans scream about skewed polls, they are not actually complaining about polling methods, whether they know it or not. They are complaining about the results.

Never mind….used the google. Fabulous, that thing…..

It was a Tweet. And with the charity angle….pretty Trump-like.

Silver has upped Obama’s chances to 79%, with 300 electoral votes. Maybe it’s not his political preference he’s expressing but confidence in his model.

@Herb: It was a tweet this morning after Joe spoke negatively about him (again) on this morning’s show.

@john personna:

Well, there we go. The Progressive math. What’s a dozen or so lives of Americans conducting America’s business in a country Obama bombed so that it could become a haven for Islamic terrorists? Especially, compared to taking action that might reflect badly on Obama’s re-election. Or might cause Obama not to get a good night’s sleep before flying off to Vegas for some cash.

Nope, it’s all about the body count. Cold, calculating numbers. Statistics. Welcome to Robert McNamara’s Democratic party. War by MBA math.

@Herb: I actually agree that he’s defending his model; I’m just saying perhaps the appearances don’t look good. While he has never hid from the fact that he’s liberal, the *appearance* is that he’s entwining the two with this bet. Even though I don’t think he is.

I think the discussion misses the issue raised. To give an example, today Nate Silver is predicting Obama will get 51.0% of the votes cast in New Hampshire with a margin of error of plus or minus 4.1%. That is, after aggregating the polls and using his special sauce of weighting polls and state effects, all he can tell us with confidence is that somewhere between 46.9% and 55.1% of likely voters support Obama.

I believe he takes the next step by considering every increment btw/ 46.9% and 55.1% as a random event. But they are not random events, the “likely voter” in New Hampshire is an existent fact. We just don’t know what that fact is with a high degree of specificity. This is not like baseball, where we have a plethora of established statistics about the players and the stadiums, and we attempt to predict future events with highly random occurrences (the ball finds a hole). The only thing random about the “likely voter” is whether he/she votes by Tuesday for his/her preferred candidate (not hospitalized and not marking the wrong candidate), but we are only concerned to the extent the failure to vote is disproportionate to one side.

Which is a long way of arguing that the numeric conclusions Silver draws as to the ultimate chance of winning are not helpful. They project more certainty than the underlying data can support. It’s better to look at his individual state aggregations, which I think are more useful than RealClearPolitics aggregations.

@ JKB…

Like I said…confused.

The loss of life, including Ambassador Stevens, is tragic.

What you omit in order to spew Partisan BS is that tens of thousands of Libyans then marched in support of the US and against the extremist militias that were responsible.

Of course that undercuts your argument.

So why mention it, right?

Grifter gotta grift.

@john personna: A fair point.

I guess with all these attacks on his model, I would have preferred him to stay above the fray. When the results are out, the accuracy of the model will be demonstrated.

@PD Shaw:

That’s pretty much the definition of random probability, actually. Once I throw a die, how it will land is a fact, we just don’t know what it is yet.

@JKB:

Dude. Why is it that, in the bubble, you guys think Libya was lost?

BASF bolstered by Libyan oil, pesticides

BP says commits to Libyan exploration campaign

Italy’s Small And Medium Size Businesses Unite To Help Libya Reconstruct

You seriously live in a fantasy world.

@Franklin:

I agree. But then again, it’s too late for that. A whole pack of dogs have already been trained to attack him.

It’s pretty low, I think. Because, as you say, “the accuracy of the model will be demonstrated.” And soon.

@Franklin:

Technically no, an odds model can’t be proven or disproven by a single run. You know, just as it takes a hundred coin flips to prove the odds at 50:50.

There are lots of neat experiments with black and white beads in a jar, pulling them out and showing to a human observer. It is really hard for us to grasp true odds even in that case, and give up the “recent-cy” of the last few beads.

There is a great book on this, Against the Gods, so called because until really recently humans didn’t even believe in odds. It was all fate, until a couple hundred years ago.

(I’m sure Nate understands that he could lose his bet, but just that it’s more likely that Joe will be making the charitable contribution, rather than he.)

@PD Shaw:

I think the only way you’d have an existent fact would be if everyone had voted and results were sealed.

Until then we do have a dynamically changing population of voter impulses, which we only see imperfectly.

Is there a better model than “random” for that ongoing change in discoverable intent?

@JKB:

I’ll renew the challenge I’ve thrown out in a couple of comment threads, so far without response. What has Obama done that is to the left of Richard Nixon?

Rule -Done, or made serious attempt to do. Not all the stuff that as a conservative you just “know” he wants to do.

@Mikey:

This is not correct at all. 2008 was only incrementally different from 2004, and it was 2004 that saw the reversal of decades of turnout declines.

@Rick Almeida: There will never be another first time we could elect an African-American President.

@Mikey:

That observation does not necessarily translate into any empirical outcomes.

Mikey: you think that helped Obama significantly? I think it likely helped goose Dem turnout a little. How much, though?

@john personna:

Well, it might be because Obama’s Secretary of State is in Algeria trying to start a war in Mali

But thanks for the links that demonstrate that, we the American taxpayer, paid for a bombing campaign to get feckless Europeans better oil, natural gas and construction contracts.

@C. Clavin: “tens of thousands of Libyans then marched in support of the US and against the extremist militias that were responsible.”

The Americans in Benghazi didn’t need a march, they needed air support. Air support and reinforcement/extraction that only the President of the United States could order. Instead, the Commander in Chief of all U.S. military forces went to bed, leaving them to die. Barack Obama is unfit for command.

@john personna:

I’m not sure which comment of mine you’re referring to. If it’s my disagreement with PD Shaw’s point, I’m simply saying that probability is nothing more than a fancy way of saying what we think is going to happen based on our current information. Said another way:

PD said that the “likely voter” isn’t a random probability, it’s a fact (but one that we don’t know yet). And all I am saying is: an observer’s expectation of what the fact is *by definition* makes it a random probability to the observer.

It’s a semantic argument, but it seems relevant to his point. PD appears to believe the likely voter model is unsupported by the data, which I believe Silver would disagree with based on historical data and a bunch of other information. If that’s not what PD is saying, then I’ll just stick to my semantic argument only.

@Franklin: We can say that the correct measurement is randomly distributed across the interval; I won’t because I think an informed observer can reasonably conclude things about the probable outcomes in most of these states in ways that are not quantifiable. My issue is that it is not useful and perhaps misleading to take the additional steps to try to quantify something if it’s merely meant to quantify random variance.

And the roll of the die analogy is not helpful to me. I would never place a bet or assume anything based upon a single roll of the die. I will be willing to assume things if we roll the die 100 times.

@JKB:

Oil is: (a) fungible, (b) magic

@PD Shaw:

If someone were to offer you 100:1 payout on a 6:1 roll, you’d be a fool not to take it. Odds do tell us something about likelihood even if we cannot confirm the odds with one roll.

Look, voters fall in three broad camps: decided-R, undecided, and decided-O. None of those are stable, people migrate at the margins. We are seeing migration now, as the trend-line moves.

Nate didn’t come to this as a babe. He has past data about the nature of voter stickiness and migration, as well as the variability of who actually shows up at the poll.

What you seem to be saying, without data, is that your mental imaging trumps his data series.
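The dice bet in the comment above can be worked out directly. This is a small sketch, assuming "a 6:1 roll" means a one-in-six chance of winning and "100:1 payout" means winning 100 units for every unit staked; `expected_profit` is a hypothetical helper name.

```python
from fractions import Fraction


def expected_profit(p_win, payout, stake=1):
    """Average profit per bet: win `payout` with probability p_win, else lose `stake`."""
    return p_win * payout - (1 - p_win) * stake


# One-in-six chance of winning 100 units on a 1-unit stake.
ev = expected_profit(Fraction(1, 6), 100)
print(ev, float(ev))  # 95/6, about 15.83 units per bet on average

# For comparison, a fair payout on the same roll (5 to 1) has zero expected profit.
print(expected_profit(Fraction(1, 6), 5))
```

The single roll still loses five times out of six; the expected value only says the bet is favorable on average.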

@Rick Almeida: You’re right, it doesn’t. But that doesn’t mean it didn’t, in this particular instance. I consider it a very important factor, and I’m sure you recall at the time it was seen as quite significant, transformative even. I don’t recall ever seeing that level of enthusiasm for any Presidential candidate, but I only remember as far back as Reagan.

Seems to me it wouldn’t be that hard to figure out how it affected Democrat voter turnout. The data are available. I should take a look.

@Rob in CT: Good question. Since I’m the one who thinks it did, it’s on me to research the data that are available. If it’s slow in the office this afternoon, I’ll do that.

@Herb:

A lot of people just don’t get statistics. When Nate Silver says “Obama by 300” he’s not actually saying Obama is going to win the election with 300 electoral votes. He’s saying there’s a 50% chance he’ll have more than 300 votes and a 50% chance he’ll have less.

Given that there’s a lot of big electoral vote states (Ohio, Florida, Virginia, etc.) that are very close, and that they’re all probably going to end up breaking the same way in the end, this seems a perfectly reasonable statement to me. And it remains a reasonable statement even if Romney ends up winning. Much as if you ask someone to predict how much they’re going to get rolling a six-sided die, an answer of 3.5 is reasonable even if they end up rolling a 1.

Nate Silver’s current confidence interval is +/- 58 electoral votes. So he’s saying there’s a 95% chance Obama gets between 242 and 358 electoral votes. The 300 number is the expected value, which is just an over/under number. There’s a 47.5% chance Obama gets 242-300 and a 47.5% chance he gets 300-358.

Which isn’t as outrageous a statement as the Republicans might like. Again, if you recognize that all the close states are probably going to end up breaking mostly for Romney or mostly for Obama, those two scenarios are equally likely.
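The arithmetic in the comment above is easy to check. The sketch below computes the expected value of a fair six-sided die and the interval implied by 300 plus or minus 58; `interval` is a hypothetical helper. One caveat: treating the expected value as a 50/50 over/under line, as the comment does, only holds when the distribution is symmetric around it, which is an assumption here rather than something the thread establishes.

```python
from fractions import Fraction

# Expected value of a fair six-sided die: each face has probability 1/6.
expected_roll = sum(Fraction(face, 6) for face in range(1, 7))
print(expected_roll, float(expected_roll))  # 7/2, i.e. 3.5


def interval(center, half_width):
    """Return the (low, high) bounds of center +/- half_width."""
    return center - half_width, center + half_width


# 300 electoral votes with a +/- 58 interval, as quoted in the comment.
print(interval(300, 58))  # (242, 358)
```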

Two things. First, Silver (and probably some of the others) adjust for the no-cell problem. Second, Silver shows that, in the years since there has been a great growth in the number of state polls, projecting the national vote result from them has been more accurate than the national polls themselves (see his post on the latter point from Oct. 31).

(And, rather obviously, if the state polls better project the national vote than do the national polls, they also better project the electoral college.)

@Stormy Dragon:

One of the interesting things about the Against the Gods book was that statistics and odds were invented by gamblers looking for an edge. They have best application there, in constrained systems. We don’t need to worry about a six-sided die growing a side or losing one.

Taleb carries on from there and notes that rules about constrained systems do not map exactly to our unconstrained world. There are Black Swan events. Mr. Obama or Mr. Romney might still be filmed kicking a blind person.

But, baseball isn’t constrained either, and Sabermetrics has worked there, so I’ll give the political equivalent some merit.

@Rob in CT, @Rick Almeida: Looking at a report of research done at the Population Studies Center of the University of Michigan (Go Blue!). Based on the changes in voting patterns between 2004 and 2008, the research concludes Obama’s race likely won him the following: Nevada, New Mexico, Virginia, North Carolina, Florida, Indiana, and Ohio. Even winning those states wouldn’t have gotten McCain the win–he’d have ended up at 269 EVs. But it does indicate Obama’s historic candidacy strongly influenced voting patterns in a way nothing had before.

Here’s a link to the report. I’ll look for others, as they may reach a different conclusion.

How Did Race Affect the 2008 Presidential Election?

I also found a list of factors that made the 2008 election unusual, from 270toWin.com:

There were several unique aspects of the 2008 election. The election was the first in which an African American was elected President. It was also the first time two sitting senators ran against each other. The 2008 election was the first in 56 years in which neither an incumbent president nor a vice president ran — Bush was constitutionally limited from seeking a third term by the Twenty-second Amendment; Vice President Dick Cheney chose not to seek the presidency. It was also the first time the Republican Party nominated a woman for Vice President (Sarah Palin, then-Governor of Alaska). Additionally, it was the first election in which both major parties nominated candidates who were born outside of the contiguous United States. Voter turnout for the 2008 election was the highest in at least 40 years.

@PD Shaw:

You appear to be confusing 2 very different sorts of uncertainty.

The uncertainty in the likely voter estimate is based on the representative nature of the sample, not the unpredictable behavior of a likely voter. In a coin flip with a balanced coin there is a 50% possibility of heads on any given toss, and based on that you can calculate the probability of getting 50 out of 100 tosses or of getting some other results. It is quite unlikely that 100 tosses would actually result in a 50:50 split, but there is a high probability of getting close, and the looser your definition of close, the higher the probability.

Likely voter polling models assume that the poll respondent will do exactly as they say, but they don’t ask all eligible voters, only a sample. And statistical theory allows you to calculate the probability that the result in the entire population is within any given range of the result in the sample. This is what all of the pollsters and analysts are working with. The margin of error is the range that gives a 90 or 95% probability of including the entire population result, but you can measure the probability of any range. The larger the range, the higher the probability.

Since winning the election is the important issue you calculate the probability that the true result is anything greater than 50%. This is what Silver is doing. Rather than treating increments between 46 and 55% as random events, he is calculating the probability (based on the same known characteristics of the polling that gives the 4.1% margin of error) of the true result being between 50+% and 100%. He is not projecting more certainty than the underlying data can support.

The problem is that people aren’t really very good at relating to uncertainty. When I see a weather report that says 70% chance of rain, I expect it to rain. When I see a weather report that says 30% chance of rain I think I better prepare for rain. Mathematically this is unreasonable but practically it makes sense because in general erring on the side of preparing for rain is safer than the reverse. The amount of uncertainty still there in Silver’s numbers should make anyone understand that either result could still easily occur, and if there is anything you need to do to avert or prepare for the less likely event, do it.
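Both calculations described in this comment can be sketched in a few lines of Python. The first part computes exact coin-flip probabilities. The second turns an estimate and its margin of error into a probability of exceeding 50%, assuming the error is normally distributed and that the quoted margin is a 95% interval (1.96 standard deviations). Those are simplifying assumptions for illustration, not a description of Silver's actual model, and the function names are made up.

```python
import math


def prob_heads_between(lo, hi, n=100):
    """Exact probability of getting between lo and hi heads (inclusive) in n fair tosses."""
    return sum(math.comb(n, k) for k in range(lo, hi + 1)) / 2 ** n


def normal_cdf(z):
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def prob_above(threshold, estimate, margin_of_error, z_crit=1.96):
    """Probability the true value exceeds `threshold`.

    Assumption: the error is normal and margin_of_error = z_crit standard deviations.
    """
    sd = margin_of_error / z_crit
    return 1.0 - normal_cdf((threshold - estimate) / sd)


if __name__ == "__main__":
    print(f"exactly 50 heads:  {prob_heads_between(50, 50):.3f}")
    print(f"45 to 55 heads:    {prob_heads_between(45, 55):.3f}")
    print(f"40 to 60 heads:    {prob_heads_between(40, 60):.3f}")
    # New Hampshire figures quoted earlier in the thread: 51.0% +/- 4.1.
    print(f"P(share > 50%):    {prob_above(50.0, 51.0, 4.1):.3f}")
```

The coin-flip numbers show the point about "close": an exact 50:50 split is rare, while wider ranges around 50 become very likely. Under the stated assumptions, a 51.0% estimate with a 4.1-point margin corresponds to roughly a two-in-three chance of finishing above 50%.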

@Mikey:

I don’t think the paper can be read as “Obama’s race likely won him the following: Nevada, New Mexico, Virginia, North Carolina, Florida, Indiana, and Ohio.”, as there’s no reason to think those states would all have voted for McCain over a generic Democrat.

@David M: Well, that was the author’s conclusion, not mine. And you’re correct, those states may not have voted for McCain anyway, and in fact another section of the paper talks about how turnout among non-minorities was lower, possibly because of Republican dissatisfaction with McCain. So even if Obama hadn’t run, enough Republicans may have stayed home in those states that McCain wouldn’t have won them.

But it pretty clearly shows minority voters turned out at a level much higher than they did in 2004, and that their party identification as Democrat increased significantly as well.

But I don’t think that “won the election for Obama,” not least because even if McCain had won the seven states the research named, he still would have lost, and again, I agree with you that there was no guarantee McCain would have won them if another Democrat had been running–especially since the “other Democrat” would probably have been Hillary Clinton.

@DRE: Additional point. The uncertainty reported by pollsters and analysts like Silver is not based on the probability of how the election turns out, as in if we had 100 elections with today’s conditions, in what percent would Obama win. The uncertainty is based on the likelihood that pollsters have randomly drawn a non-representative sample, as in if we drew 100 random samples from the real world today, what percentage would be unrepresentative enough to predict the wrong winner.

JKB never responded to my comment last night, so I am giving it another shot:

@Mikey:

Thanks for responding. That strikes me as overselling things a bit, but hey, I didn’t do the research.

@Rob in CT: The researcher seemed pretty confident that the upsurge in minority turnout wasn’t part of a general increase overall, that it was due to Obama’s candidacy.

Still, I was a bit surprised when I went to 270toWin.com and figured out even if Obama hadn’t won the seven states the researcher figured Obama got due to minority turnout, McCain would still have lost, although by the slimmest margin–he’d have gotten to 269.

And as I told David M, it’s certainly not a sure thing McCain would have won those states against any Democrat, and certainly not against the next most likely opponent, Mrs. Clinton.

Well right, with Clinton perhaps you get a comparable “first female POTUS” boost. Hard to say.

@DRE: I’m not confused by the two different points of uncertainty, but I may not have been clear. My intent was to specifically ignore one type of uncertainty, leaving only one of concern.

I think the outcome of the election is an existent fact as of this date. The current preferences of the likely voter will be the outcome next week. Any intervening uncertainties are too small. The cat in the box is either dead or alive; it’s possible that the cat’s condition might alter between when I guess which and when I open the box, but the likelihood is so relatively small that it should be ignored.

I predict that Nate Silver will be correct on his state-by-state predictions (all states will have results next week that are within the margin of error). And if I’m wrong Silver won’t be alone, he’s simply aggregating other people’s work.

My issue is with the next step in which Silver takes the state-by-state predictions, and it’s my understanding he uses sports/betting related simulations that convert the states into weighted coin flips. I think that’s a step too far, and I am not aware of anybody else doing it. State based prediction models typically report their results in terms of predicted electoral votes.

I am a consumer of sabermetrics analysis; I understand the utility of predicting a player will hit the ball at least once given four repetitions. What clarity or insight do I gain thinking of the election that way, particularly when he has better information on a state-by-state basis?

@Franklin: It may just be confidence in his models. Getting 50/50 odds on a bet that you think is closer to 4:1 is a pretty good deal. Nate is, after all, an avid poker player; betting mispriced odds may be reflexive. : )

Ok I get that I’m hauling this all the way back from comment #25 or whatever

But seriously, find me ONE “staunch Democrat” who is voting Romney because they don’t “trust what Obama says on Israel”

This person is imaginary

@PD Shaw:

The problem is that you seem to be treating the margin of error as more than it is, and treating everything within it as equally probable. The margin of error is just an arbitrary definition of a certain level of probability, but it is based on a probability distribution which has a much higher density near the point estimate. If you are looking at a large number of individual contests, and you only look at whether 50% is within the margin of error, you are ignoring a lot of information. If 50% falls within the margin of error you still know something about the probability of a correct estimate based on the distance between the estimate and 50%. If a poll gives Obama a 2 point lead with a 4 point margin of error, that is a very different result than one in which Romney has a 2 point lead. Neither result has a lead outside the margin of error, but the probability of getting one or the other poll result if Obama is actually winning the state is very different. In a purely statistical model, with the assumption that we only have sampling error, you could treat all of the probabilities as independent, but Silver does make allowances for systematic error or bias beyond the sampling error. He goes farther than most analysts in trying to quantify some additional error, but that increases the uncertainty he finds. But the simulations he runs are basically an attempt to measure the probability of outcomes rather than compute the cumulative probabilities of 2 to the 50th power possible outcomes. You could do the same thing with a coin flip. Given a fair coin you can calculate the probability of getting more than 30 heads from 50 tosses. But if you were to repeat the 50 tosses 1000 times, and count how many of them produced more than 30 heads, it would probably give you a pretty good approximation of the probability. This is not necessary because it is easy to calculate.
However if you have 50 different coins and they have different values and different levels of unfairness (either heads or tails more likely), and you flip each one and want to know the probability of getting more than $2.70 worth of heads, the calculation is difficult but a repeated trial or simulation is pretty easy. After you had done the trials or simulations you would know a lot more about the likelihood of getting at least $2.70 worth of heads than you knew from just looking at the values of the individual coins and whether each one was more likely to produce heads or tails or was fair.
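Both coin examples above can be sketched in a few lines. The fair-coin case is computed exactly and then approximated with 1,000 repeated trials; the 50-coin case uses made-up coin values and made-up biases (drawn at random purely for illustration, and not meant to represent any real state), with $2.70 as the threshold from the comment.

```python
import random
from math import comb

def exact_more_than(heads, tosses):
    """Exact P(more than `heads` heads) in `tosses` flips of a fair coin."""
    return sum(comb(tosses, k) for k in range(heads + 1, tosses + 1)) / 2 ** tosses

def simulated_more_than(heads, tosses, trials, rng):
    """Estimate the same probability by repeating the tosses `trials` times."""
    hits = 0
    for _ in range(trials):
        count = sum(rng.random() < 0.5 for _ in range(tosses))
        if count > heads:
            hits += 1
    return hits / trials

def simulate_weighted(coins, threshold_cents, trials, rng):
    """coins is a list of (value_in_cents, probability_of_heads) pairs."""
    hits = 0
    for _ in range(trials):
        # Flip every coin once and add up the value of those landing heads.
        total = sum(value for value, p_heads in coins if rng.random() < p_heads)
        if total > threshold_cents:
            hits += 1
    return hits / trials

rng = random.Random(2012)

exact = exact_more_than(30, 50)
approx = simulated_more_than(30, 50, 1000, rng)
print(f"Fair coin, more than 30 heads of 50: exact {exact:.4f}, simulated {approx:.4f}")

# Hypothetical coins: 50 values in cents, each with an assumed bias.
values = [1, 5, 10, 25] * 12 + [1, 5]
coins = [(value, rng.uniform(0.2, 0.8)) for value in values]
weighted = simulate_weighted(coins, 270, 5000, rng)
print(f"Unequal coins, more than $2.70 in heads: simulated {weighted:.3f}")
```

The exact calculation is a one-liner for identical fair coins, while the unequal-coin case has no comparably simple closed form. The simulation code, by contrast, barely changes between the two.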

@DRE:

Silver has a lot of data up, but “Chances of Winning” should mean X percent of 100 elections.

@PD Shaw:

If he is building up from state outcomes, they are independent. Simulation is the only way to combine them to an electoral total. If you simply take the odds favorite in every case, as a sum, you take the unlikely single path that your simulation is perfect in every instance.
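A small worked example of the "unlikely single path" point, taking the independence assumption stated in this comment at face value. The ten contests and the 80% figure are hypothetical.

```python
from math import prod

def chance_all_favorites_win(win_probs):
    """Probability that every favorite wins, assuming independent contests."""
    return prod(win_probs)

# Hypothetical: ten close states, each with an 80% favorite.
swing_states = [0.8] * 10
p_single_path = chance_all_favorites_win(swing_states)
print(f"Chance every favorite wins: {p_single_path:.1%}")
```

Even with a strong favorite in each contest, the map where all ten favorites win is itself an unlikely outcome, which is why summing the favorites is not the same as estimating the electoral total.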

@DRE:

Sorry, typing as you were. Your answer is much more detailed.

@john personna: Sometimes I get carried away.

@DRE:

In the end it amounts to the same thing, but my point was the uncertainty comes from sampling or other polling error, not from any potential randomness in voter behavior.

@DRE:

I’m sure you have the most direct path to modeling. I started treating it as a real black box because given a closed formula, I have no idea how many secondary modules there are.

For instance, if a “likely voter” is “undecided” at this point I’d like to know their historic record for actually showing up. I really suspect that a lot of “undecideds” a week out will be no-shows.

@ JKB…

“…The Americans in Benghazi didn’t need a march, they needed air support. Air support and reinforcement/extraction that only the President of the United States could order…”

Your partisan bullshit benefits from 20/20 hindsight.

Congratulations.

Have you applied the same vision to 9/11, or the 9% in one month Bush Contraction?

My guess is no.

Also…my guess is it could have been ordered by others if deemed necessary…without the benefit of your marvelous 20/20 hindsight….so essentially you are just full of crap all around.

Low information voters like you are dangerous.

Of course I am only assuming you vote…the low information part is self-evident.

Okay a bit late, but I’ll toss this in anyways…..

None of that (in the OP) makes Silver’s analysis objective. Statistical analysis is, IMO, never objective. Any statistical model is going to have some sort of subjectivity attached to it. For example, if you are constructing a model that has a number of different variables in it, then which variables you select for inclusion is a subjective decision. The possible functional form is also subjective. How to handle/clean problem data points is also subjective (do you dummy them out, “clean the data” somehow, etc.).

Of course, subjectivity in a statistical model does not render it nonsense either and AFAIK Silver’s models are not fully explicated to the public….so taking his analysis with a grain of salt is advised….as you should do with any and all statistical models.

@john personna:

No, they’re not independent. If something causes Obama to start doing better in one state, there’s a good chance it will cause him to do better in other states. In the end, most of the close states are going to end up breaking one way or the other, so the electoral college vote is unlikely to be as close as the popular vote suggests.

@JKB: , anyone?

Waiting.

@gVOR08: @anjin-san:

@Steve Verdon:

You might be alluding to the difference between simple and constrained systems, as I discussed above, and fuzzier human systems. Statistics were invented for simple systems without human involvement or volition. Coin flips, beads in a shaken jar, roulette wheels, etc. All of those can be modeled objectively.

The question might be, as you make the transition to unconstrained systems, especially systems with animal actors, how you treat them. In the most straightforward simplification, as many have described above, you just assume that voters have a fixed internal state, their future-vote, and you collect that the best you can. Assuming random sampling (possibly hard) you can do an objective analysis.

Of course, humans do not have fixed internal states, and so that simplification could bite you in the ass.

@Stormy Dragon:

As I describe above, if you are seeking a fixed internal state (a simplification) then the states can be measured independently. It would only be some alternate, weird, AI model that would do otherwise … say by trying to determine a trend in sentiment or something.

(When I look at Silver’s data I do a momentum based analysis, myself. I am comforted both by Obama’s strength, and the direction of change. I don’t believe, looking at the history of the campaign, that popularity is a random walk. It trends.)

@Steve Verdon:

Okay a bit late, but I’ll toss this in anyways…..

I’d say it is welcome, as it is all true and important.

Statistical analysis should not strive for, nor can achieve, objectivity. What people should look for is a track record of accuracy and an ability to adjust to changing conditions.

Silver is fairly new to the game, but has done well so far. He is well-known because, as with baseball statistics, he is able to present data in a way that makes it quite understandable to smart people who don’t know a ton about statistics. That and being in the right place at the right time. Because he has become “famous” (among a certain group, anyway), he is also a target of scorn among those who don’t like what he says the polls are telling us. None of these people had a problem with Silver in 2010 when he was predicting big Republican pickups across the board. Now in 2012 he is predicting wins for Democrats, so he’s evil and must be destroyed.

But he’s just a guy deciding which numbers are more important than others. Whether his analysis is an accurate reflection of the electorate is unknown at this time. His track record is short, but good.

@mantis:

There are worse, harder systems. Consider the difference between an Oct 1st prediction of the Nov 6th election result, and an Oct 1st prediction of the Nov 6th gasoline price.

Yes, Americans go into harm’s way for their country. Some of them die. But we don’t abandon them to the enemy when they are under attack. This attack went on for 7 hours. Plenty of time to bring aircraft and even a fast response platoon on-site. Yet, the Commander in Chief went to bed and the CounterTerrorism Security Group was not convened.

It is not the attack that is significant. It is the abandonment of those under fire by the Commander in Chief.

@john personna:

Question: Who out here wants low gas prices and who out here wants a good economy? Anyone who says “BOTH!” can just hit the edit button as it ain’t gonna happen.

@OzarkHillbilly:

I was just picking something notoriously unpredictable. Gas and oil prices are very volatile, and narrow prediction is a guess.

@JKB:

According to the WSJ, Libyan government reinforcements arrived in about 30 minutes. US reinforcements from Tripoli within about 4 hours.

Someone was doing the best they could there, on the ground, in Libya, with the fog of war.

Would you really want a President Romney to sweep them aside and try to micromanage that kind of thing from Washington? Maybe with a Bat Signal?

@john personna:

When I said they’re not independent, I was talking in the technical sense (e.g. P(Obama wins Ohio and Florida) =/= P(Obama wins Ohio) P(Obama wins Florida)). This is either the case or it isn’t. And if the states are not independent, but Silver is assuming they are anyway, then all his calculations are incorrect.
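The technical sense of independence can be checked numerically. The sketch below is not Silver’s model (which, as noted in this thread, has not been published); it assumes two states whose final margins share a common national swing plus their own state-level noise. The leads and standard deviations are made up for illustration.

```python
import random

def simulate_two_states(n_trials=20000, seed=7):
    """Return (P(Ohio), P(Florida), P(both)) under an assumed shared-swing model."""
    rng = random.Random(seed)
    ohio_wins = florida_wins = both_win = 0
    for _ in range(n_trials):
        shared_swing = rng.gauss(0, 2)                     # hits both states alike
        ohio_margin = 2 + shared_swing + rng.gauss(0, 2)   # assumed 2 point lead
        florida_margin = 0 + shared_swing + rng.gauss(0, 2)  # assumed dead heat
        ohio = ohio_margin > 0
        florida = florida_margin > 0
        ohio_wins += ohio
        florida_wins += florida
        both_win += ohio and florida
    return ohio_wins / n_trials, florida_wins / n_trials, both_win / n_trials

p_ohio, p_florida, p_both = simulate_two_states()
print(f"P(Ohio) * P(Florida) = {p_ohio * p_florida:.3f}")
print(f"P(Ohio and Florida)  = {p_both:.3f}")
```

With a shared swing in the model, the joint probability comes out higher than the product of the two individual probabilities, so multiplying state-level odds together would understate how often the close states break the same way. Setting the shared swing’s standard deviation to zero makes the two numbers agree up to simulation noise.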

@Stormy Dragon:

Again, you are speaking of a different modeling path than we think he has. He has not published of course. But if he is modeling “internal state” you can throw away all worries about how people got there. They are decided voters.

Bob’s process in Colorado may have been similar to Jill’s process in Florida, but they decided independently in the past. Their process is no longer relevant.

You guys know Romney is going to win, right?

@Stormy Dragon:

There are two parts that are different. The probability of random sampling error is independent. The probabilities of other types of polling errors or of there being a swing at the last minute are not independent. Silver models both parts and treats them separately in constructing the simulations. You have to remember what we are talking about. A simple aggregation of state polls shows Obama winning. The probability we are talking about is the probability that they are correct.

@Drew:

Yes Drew, we all know Romney is going to win in a landslide. But we’re pretending that Obama has a chance so we don’t depress Democratic turnout. If we’re lucky we may keep the Republicans from getting 60 seats in the senate.

@ JP..

With the Bat Signal…that’s funny shit, guy.

Is JKB really JTea???

Well, that is certainly the first time in history a President has gone to bed while a battle is going on. Traditionally, they just do a lot of benzedrine and stay awake as long as it takes. That was a real bitch during Khe Sanh.

You do know, don’t you, that as Commander in Chief Obama can tell his many subordinates to do what they need to do to achieve a desired outcome – he does not have to personally direct operations and micromanage – in fact, a President who does that is almost certainly doing more harm than good.

You guys should work on getting your story synched up. There is “he went to bed” and “they asked for help and he refused” and “he watched them die from the situation room and did nothing”. No doubt this will provide fodder for crazy uncle emails for some time to come.

Sometimes things just go to shit. Carter had Desert One, Reagan had the Marine barracks, and so on.

@JKB:

Wow. You pretty much nailed us liberals there.

If there’s one thing that describes liberals, it’s hard-edged, steely-eyed calculating hawks.

But really, liberals are the Very Serious People who balance budgets, make war and protect women, children, and conservatives from the Bad Men.

I guess it’s true, that if a person isn’t a conservative at 18, he has no heart, but if he is still a conservative at 30, he has no brain.

I’m 53. The last time Robert McNamara was an important figure in defense policy, I was in the third grade.

@C. Clavin: No, Jaytea is a troll who will say anything to annoy people. JKB and Drew really believe the stuff they post here. You may decide which is sadder.

You really are a moron. Desert One went to crap in a few minutes. It took only seconds for the truck to penetrate the marine perimeter in Lebanon.

The Benghazi attack lasted for hours. The President did not order assets deployed.

@john personna:

Well, as the attack lasted for 7 hours, with two of the deaths occurring near the end, Obama should have sent more assets. All that was really needed was a gunship. They had real time intel and laser targeting, a couple miniguns in the sky would have resolved quite a few of the attackers.

But the events are correlated.

@JKB:

So you’re giving up on the cover-up crap and are now just second-guessing the government’s response?

That’s a slight improvement, I guess, but what insights do you expect us to glean from that other than the old “Hindsight is 20/20” maxim?

Conflicting initial reports indicated this was a protest and you wanted a gunship? Gimme a break…..

I see. And these events took place in a vacuum. In Lebanon, the rules of engagement were very questionable. Sentries were ordered to keep their weapons at condition four (no magazine inserted and no rounds in the chamber). By the time the two sentries were able to engage, the truck was already heading towards the building’s entry way, armed.

And of course there is the “should we have really been there” question. And the decision to provide naval gunfire support, which left many in Lebanon feeling that we were part of the war, not part of the solution.

So the US took terrible casualties on a questionable mission, and we ended up leaving Lebanon with our tail between our legs. And President Reagan had nothing to do with it? Of course he did. But you are giving him a pass, because he played for your team.

@JKB:

So assuming we should have ordered an AC-130 gunship to open fire, how many Libyans were you hoping would die? Make sure to include women and children.

@ JKB

BTW, anyone who claims that the dead hand of Robert McNamara is somehow at work in today’s Democratic Party should be very careful about throwing the word “moron” around. It might just become a Boomerang. McNamara’s memory is more or less universally despised in Democratic politics.

What will you be telling us next, that Obama is channeling Louis Howe?

@JKB:

Don’t gunships, in battles with insurgents, require trained observers with communications gear?

Are you seriously suggesting that you send a gunship to a place with bad militia on good militia violence, just with orders to “shoot bad guys?”

I think you are. This is the level of your criticism. Send the AF to shoot who looks suspicious from 2000 feet.

I for one am glad that Drew is not reduced to cryptic one-liners.

I’m very impressed by JKB’s skill as an armchair warrior. Deploying assets, lighting up the bad guys with lasers, breaking out the miniguns, resolving attackers – Thank God America has this kind of iron in its spine.