What Are We Supposed To Think About These New Online Polls?

The 2016 election cycle is seeing "scientific" online polling become more prominent, but it's unclear just how reliable it is.

The 2016 campaign season has seen the rise of a new type of polling, but it’s not clear how reliable it actually is. The trend is led by polling/press partnerships such as Reuters and Ipsos, The Economist and YouGov, and NBC News, which has recently begun teaming up with the online polling firm SurveyMonkey when not conducting its regular poll with The Wall Street Journal. Voters, pundits, and candidates are being asked to evaluate polls conducted using online panels. Unlike the traditional “online” polls that many of us are familiar with, these panels are said to scientifically sort their participants to eliminate problems such as self-selection, the ability to flood a website with responses in support of one candidate, and unrepresentative samples generally.

As Nate Cohn notes at The New York Times, though, it’s not at all clear that the product that these new polls are producing is something we ought to be relying upon:

According to the Pollster database of Republican primary polls, there have been nearly as many Internet surveys as there have been traditional, live-interview telephone surveys, 90 versus 96. By this time in 2011, opinion researchers had conducted just 26 online surveys and more than 100 live-interview polls.

Well-known companies, as well as smaller start-ups, are doing online polling. Every day, Reuters/Ipsos publishes a new iteration of its online tracking poll — the only tracking poll, telephone or Internet, of this election cycle. Morning Consult, an organization that didn’t even exist in 2012, now publishes a weekly poll. SurveyMonkey, despite its seemingly unserious name, has conducted election polls for NBC News, The Wall Street Journal and The Los Angeles Times. The Wall Street Journal sponsored a post-debate survey from Google Consumer Surveys. CBS News conducts surveys with YouGov, an Internet market research firm based in Britain.

The abundance of Internet-based polling reflects the extent that it has become easier over the last decade. According to Pew Research, 87 percent of Americans now use the Internet, so an online survey can cover most of the population.

But big challenges remain. Random sampling is at the heart of scientific polling, and there’s no way to randomly contact people on the Internet in the same way that telephone polls can randomly dial telephone numbers. Internet pollsters obtain their samples through other means, without the theoretical benefits of random sampling. To compensate, they sometimes rely on extensive statistical modeling.

As Cohn notes, not all of the companies conducting online polls operate in the same manner:

Reuters has the most traditional approach: It recruited its panel from a traditional telephone and mail survey. YouGov and Morning Consult use panels recruited from a variety of sources on the Internet. Google entices people to take a poll. SurveyMonkey has the most novel approach: It turns to the millions of people who participate in any number of SurveyMonkey’s unscientific polls on other subjects — the kind you can make yourself — and adds questions about politics.

The statistical techniques that the Internet pollsters then use to adjust these data vary nearly as much.

(…)

The fact that online pollsters have sometimes relied on extensive modeling to adjust their results could be a sign of the limitations of online polling. The challenge is even worse during primary season, when the statistical tools used in the general election don’t work nearly as well.

Some online pollsters, for instance, adjust their sample to match the ideology and party identification of voters, which are two extremely strong predictors of how people will vote in a general election. If you can nail the balance of Democrats and Republicans, liberals and conservatives, whites and nonwhites, you can probably get the right result. A group of political scientists used this exact technique to poll the 2012 presidential election using data collected from Xbox.

But this heavy-handed technique isn’t as useful in a primary. Nailing the right number of Democrats and Republicans may help a general election poll, but there’s no similar question that helps make sure a poll has the right number of voters for Mr. Trump. If weighting by partisanship is necessary in a general election, one wonders how much quality declines in a primary, without the same crutch.
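As a rough illustration of the adjustment Cohn describes, the sketch below reweights a sample so that its mix of party identification matches a target electorate. The function name `poststratify`, the group labels, the candidate names, and every number are hypothetical. None of them is drawn from any pollster’s actual method or data.

```python
from collections import Counter


def poststratify(respondents, targets):
    """Reweight respondents so each group's share matches its target share.

    respondents: list of (group, choice) pairs (hypothetical survey answers)
    targets: dict mapping group -> assumed share of the electorate
    Returns a dict mapping choice -> weighted share of the vote.
    """
    n = len(respondents)
    group_counts = Counter(group for group, _ in respondents)
    # Weight = assumed population share / observed sample share for the group.
    weights = {g: targets[g] / (count / n) for g, count in group_counts.items()}

    weighted = Counter()
    for group, choice in respondents:
        weighted[choice] += weights[group]

    total = sum(weighted.values())
    return {choice: w / total for choice, w in weighted.items()}


# Made-up sample of 100 people in which Democrats are overrepresented.
sample = (
    [("Democrat", "A")] * 54 + [("Democrat", "B")] * 6
    + [("Republican", "A")] * 4 + [("Republican", "B")] * 36
)
# Assumed (not real) party split of the electorate.
targets = {"Democrat": 0.5, "Republican": 0.5}

raw = Counter(choice for _, choice in sample)
raw_share_a = raw["A"] / len(sample)
weighted_share = poststratify(sample, targets)
print(f"raw A: {raw_share_a:.2f}, weighted A: {weighted_share['A']:.2f}")
```

In this made-up sample, Democrats are 60 percent of respondents against an assumed 50 percent of the electorate, so the raw tally gives Candidate A 58 percent while the reweighted tally gives 50 percent. The result is only as good as the assumed targets, and as Cohn points out, a primary offers no comparable target to weight toward.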

One thing that is notable about the online polls, and something that may indicate just how reliable they actually are, is the extent to which they are inconsistent with other polling. While it’s not reasonable to expect that every poll will provide the same level of accuracy, or the same results, it does seem reasonable to expect that polling would be relatively consistent within a certain range and that the general trends would be consistent. That’s why polls that are wildly off the mark from what other polling is showing should generally be viewed skeptically regardless of what source they come from. Even the most reliable polling company can end up with a bad sample at some point and produce an outlier, and we can point to several examples of such results over the past several elections. In the case of the online polls, while the results have not generally been wildly different from more traditional polling, one notable difference is that they have tended to be more favorable to Donald Trump, as The Washington Post’s Philip Bump noted back in October. For example, here’s a chart comparing Trump’s performance in online polling with his performance in traditional live phone call polling:

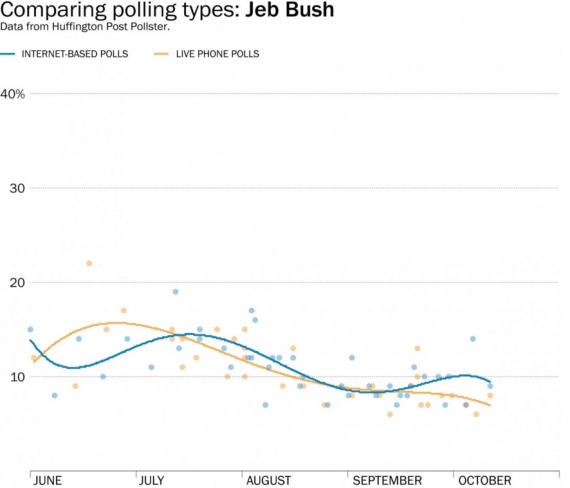

A cursory look at the numbers since mid-October shows that this disparity between online polling and traditional polling has continued, and, as Bump noted in his October piece, does not appear to exist for other candidates. Just look at a similar chart for Jeb Bush:

So what explains the disparity that seems to exist only for Trump when it comes to online versus traditional polling? Bump and others who have looked at the issue have theorized that at least part of it may be that respondents are more comfortable admitting their support for a controversial candidate like Trump when responding online than they would be when talking to a live human being. While that sounds plausible, it doesn’t necessarily hold water. Based on the data available from Pollster, Trump also appears to do significantly better in online-only polling than he does in automated phone polling, in which respondents merely press buttons on their phone to answer questions rather than talk to a human being. It is true that Trump does better in those automated polls than he does in live-caller polling. But if the disparity were simply a matter of people being more willing to admit their support for a candidate like Trump when they aren’t talking to a live human being, then one would expect the gap between Internet and automated polling to be smaller than it actually is. That leaves open the idea that the samples the online companies are using are tainted in some way, and that the methods they’re using to adjust them aren’t compensating for that. Whatever the explanation, it’s a disparity worth noting when evaluating this new method of polling.

At this point, it’s hard to say whether these new polling techniques, and the methods that online pollsters use to adjust their samples and results to reflect the electorate they’re trying to measure, are reflective of public opinion or merely a reflection of the opinions of a statistically adjusted group of people who answered questions online. We’ve seen for years now that even the more traditional methods of polling can have issues, depending upon how they are conducted and the assumptions they make about voters, that affect their accuracy and their results to a significant degree. One of the classic examples, and one that has forced traditional pollsters to find ways to adjust quickly, is that traditional polling that relied on random calls to landline phone numbers was missing out on a growing segment of the population, including, most importantly, younger voters. Polling companies have made efforts to compensate for this, but questions still remain about just how effective they have been. In the case of online polling, we’re dealing with a completely new method of polling as far as politics is concerned, and one that doesn’t have the best reputation for objectivity to begin with. Until we start seeing actual results from the voting booth to compare to poll numbers, we won’t have any idea at all what to think of these numbers.

You can also throw the results of an online poll if you’re willing to take the trouble to use different computers with different IP numbers, or cart your laptop around to various WiFi hotspots and vote from those.

@CSK: It’s much easier than that.

Just use proxy servers.

@Davebo:

Indeed. In any case, it’s clear that online polls are easily manipulated.

The online polling we’re talking about is somewhat more sophisticated than that. Panelists are given codes unique to them that can only be used once to access the survey, apparently regardless of whether or not someone tries to use different computers with different IP addresses or proxy servers.

It’s important to understand that we’re not talking about the kind of “polls” that news organizations throw up on their website after a debate, or that anyone can create using a number of “Create Your Own Poll” websites.

Recently, I got a letter from Gallup with a one-time ID number asking me to do an online poll. Much better than having someone read to me, and I could respond when the pots weren’t boiling over, the dog wanting in, the cat wanting out, and the church ladies knocking on the front door.

Since I use nomorobo, snail mail is the only way for a pollster to reach me.

YouGov has an excellent track record with their polling, better than some traditional polling firms, so it doesn’t make sense to ignore their results. The rest of them still have some proving to do. As with all polling, it’s best to view it in the context of other polls and use the aggregate rather than focusing on any individual poll’s results.

And it’s best to ignore all polls until a few weeks before an actual election.